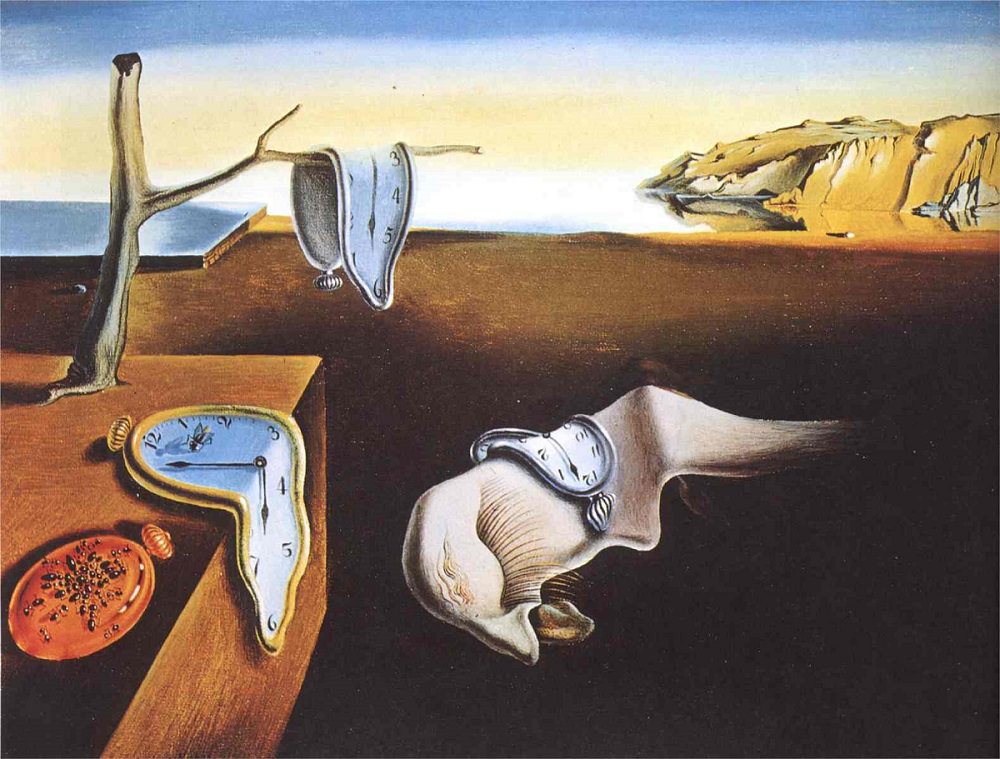

The Innovator’s Myopia

Many product organizations suffer from acute myopia. Once a product is sold or installed in the field, they lose sight of its performance, how users are interacting with it, and how well it supports the brand.

Of course, organizations do get some feedback from customers and field operations from time to time. But this information usually comes in the form of bad news: customer complaints, excessive warranty claims, and costly product replacements and repairs.

On closer inspection, organizational myopia doesn’t set in during product deployment. It usually starts much earlier, when product marketing defines the market needs and functional requirements for a promising new product.

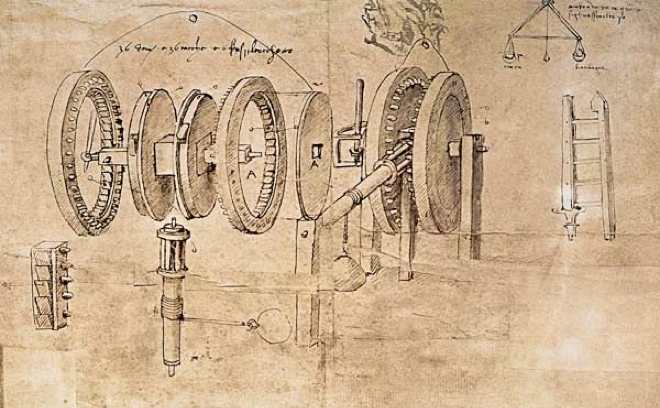

Products are frequently defined and designed based on inaccurate, out-of-date, and biased perceptions of customer needs and the competitive landscape. Product organizations are overly optimistic about customers’ enthusiasm for coping with yet another “disruptive” technology. And product designers often lack sufficient understanding of existing workflows and process integration requirements.

No wonder most new products fail.